Kernel Cave Explorers 🥾

At first, everything was fine

- A typical November morning; at the office with the team

- Let's talk about the team!

- Three developers;

- Golang / Svelte + "DevOps"

- 4-6 years of XP

- => Not really the type to hack the kernel

And suddenly, a manager

"It doesn't work!"

"I've got servers that just arrived, and the network doesn't work"

Uh... Ok 🤨

"It doesn't work!"

- It's the PKI team manager;

- Coming with a network issue;

- On custom hardware;

- And asking devs to fix it.

Actually, it kind of makes sense

- The PKI team has a specific need (a full-scale lab), so they ordered hardware;

- Facing the issue, they asked the network team (who couldn't figure it out);

- So, we're the last hope.

We check the BIOS...

... And we can see the network cards! 🎉

"Easy, it's just a driver!"

We install Debian 12

- Unsurprisingly, it doesn't work

- The cards show up in `lspci`, but not with `ip link`

- We grab the errors from `dmesg`

[ 9.526144] QLogic FastLinQ 4xxxx Core Module qed

[ 9.531195] qede init: QLogic FastLinQ 4xxxx Ethernet Driver qede

[ 9.583755] [qed_mcp_nvm_info_populate:3374()]Failed getting number of images

[ 9.583758] [qed_hw_prepare_single:4722()]Failed to populate nvm info shadow

[ 9.583761] [qed_probe:513()]hw prepare failed
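Each failing line follows the layout `[timestamp] [function:line()]message`, which is what later lets us jump straight to the right spot in the driver source. A minimal sketch in C of pulling those fields apart; `struct qed_log_entry` and `parse_qed_log_line` are hypothetical names made up for this example, not part of the driver or of any tool.

```c
#include <stdio.h>

/* Hypothetical helper type (not a kernel struct): the fields of one log line such as
 * "[ 9.583755] [qed_mcp_nvm_info_populate:3374()]Failed getting number of images" */
struct qed_log_entry {
    double timestamp;    /* seconds since boot */
    char function[64];   /* function that emitted the message */
    int line;            /* line number printed by the driver */
    char message[128];   /* human-readable text */
};

/* Returns 0 on success, -1 if the line does not follow the expected layout
 * (for example the plain banner lines that carry no [function:line()] prefix). */
int parse_qed_log_line(const char *text, struct qed_log_entry *out)
{
    int fields = sscanf(text, " [ %lf ] [%63[^:]:%d()]%127[^\n]",
                        &out->timestamp, out->function,
                        &out->line, out->message);
    return fields == 4 ? 0 : -1;
}
```

The extracted function name is what we would then search for in the kernel tree.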

And we learn some things

- The kernel does recognize our cards! (but then it fails)

- Apparently, `qed` and `qede` are the drivers for these cards;

- Each card should appear as 4 x 25Gbps cards, totaling 100Gbps;

- And the driver crashes during card initialization.

There must be a system driver

- We start searching the internet for a driver for our cards;

- We find drivers from HPE...

- ... Which are just repackaged `qed`/`qede`...

- ... For old kernel versions (5.x)...

- ... And only for RHEL and SUSE.

Well, that's not going to help us.

Still interesting though:

- The drivers are for RHEL 7 and SUSE 12 (so "old")

- => The kernel drivers should work natively, otherwise there would have been dedicated support!

- Could this be a Debian distribution bug?

So we try installing a bunch of things!

- Ubuntu (24.04) because "it has every driver in the world" => Nope.

- CentOS (Stream) because "it's just an unstable RHEL clone" => Nope.

- Alpine Linux (3.18) because "you never know, maybe it'll just work..." => Nope.

At this point, we want to give up.

Nothing works, and there's no driver available.

We go back to our usual work

- We keep one server on the side, just in case an idea comes up.

And then, the silly idea 💡

(and it was mine, heh)

"Hey, what if we try that old Ubuntu 18?"

"Florian that's totally stupid, Linux never* breaks backward compatibility, you know that tr... Oh, it works"

Ubuntu 18 => Goal!

root@ubuntu-18-lts:~# ip a

[...]

6: ens2f0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc mq state DOWN [...]

link/ether xx:xx:xx:xx:xx:xx brd ff:ff:ff:ff:ff:ff

7: ens2f1: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc mq state DOWN [...]

link/ether xx:xx:xx:xx:xx:xx brd ff:ff:ff:ff:ff:ff

8: ens2f2: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc mq state DOWN [...]

link/ether xx:xx:xx:xx:xx:xx brd ff:ff:ff:ff:ff:ff

9: ens2f3: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc mq state DOWN [...]

link/ether xx:xx:xx:xx:xx:xx brd ff:ff:ff:ff:ff:ff

[...]

And there, we're torn between joy and tears

- It works!

- But on an OS that's years old.

Doing random things works, let's keep going!

- We grab the kernel version from our Ubuntu 18 (4.15);

- And boot our Debian 12 on kernel 4.15.

- And... it works. 👀

- Just to be sure, we recompile 4.15 from source... It works too!

Jazz music stops

- The bug is somewhere in the kernel.

- But how do we find it?

- Ubuntu 18: Kernel 4.15

- Debian 12: Kernel 6.1

- That's nearly 5 years of commits on the world's largest project!

The million dollar question:

In which version does the bug appear?

When did it break?

- Name & Shame

- Try to find the exact spot to identify the root cause

- => And make the fix easier!

When did it break?

- Between 4.15 and 6.1, there are 28 versions (not counting patch releases)

- Idea: jump from LTS to LTS and see where it breaks.

- LTS => Several years of support. Non-LTS: 6 months.

- => The LTS after 4.15 is 4.19

4.19 on Debian 12 -> Fail!

- So our bug is between 4.15 and 4.19!

4.17 on Debian 12 -> Fail!

- It's somewhere between 4.15 and 4.17

4.16 on Debian 12 -> It works!

- So the bug is somewhere in the commits between 4.16 and 4.17! Time to dig into the commits.
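The jump-and-test routine above is essentially a binary search over versions. A rough sketch in C, assuming that once the bug is introduced it stays in every later version; `first_broken_version`, `simulated_boot_ok` and `demo_versions` are hypothetical names, and the simulated results simply replay what we observed (4.15 and 4.16 work, 4.17 and later fail). In reality each "test" was a kernel build plus a reboot.

```c
#include <stddef.h>
#include <string.h>

/* Callback: returns nonzero if the network cards work on this kernel version. */
typedef int (*boots_ok_fn)(const char *version);

/* Hypothetical test list, ordered oldest to newest. */
const char *const demo_versions[] = { "4.15", "4.16", "4.17", "4.18", "4.19" };
#define DEMO_VERSION_COUNT (sizeof(demo_versions) / sizeof(demo_versions[0]))

/* Stand-in for "build, boot, run ip link": replays the results from the talk. */
int simulated_boot_ok(const char *version)
{
    return strcmp(version, "4.15") == 0 || strcmp(version, "4.16") == 0;
}

/*
 * Binary search for the first broken version.
 * Preconditions: versions[0] works, versions[count - 1] is broken,
 * and the list is ordered oldest to newest.
 * Returns the index of the first broken version.
 */
size_t first_broken_version(const char *const versions[], size_t count,
                            boots_ok_fn boots_ok)
{
    size_t good = 0;          /* newest version known to work */
    size_t bad = count - 1;   /* oldest version known to fail */

    while (bad - good > 1) {
        size_t mid = good + (bad - good) / 2;
        if (boots_ok(versions[mid]))
            good = mid;
        else
            bad = mid;
    }
    return bad;
}
```

Calling `first_broken_version(demo_versions, DEMO_VERSION_COUNT, simulated_boot_ok)` lands on the entry for 4.17 after two tests. `git bisect` automates the same search at commit granularity.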

And we find it pretty quickly!

- Commit 43645ce adds the function `qed_mcp_nvm_info_populate`

- And that's the function causing issues according to the logs:

[ 9.526144] QLogic FastLinQ 4xxxx Core Module qed

[ 9.531195] qede init: QLogic FastLinQ 4xxxx Ethernet Driver qede

[ 9.583755] [qed_mcp_nvm_info_populate:3374()]Failed getting number of images <== HERE!

[ 9.583758] [qed_hw_prepare_single:4722()]Failed to populate nvm info shadow

[ 9.583761] [qed_probe:513()]hw prepare failed

To confirm, we recompile

Time to fix it

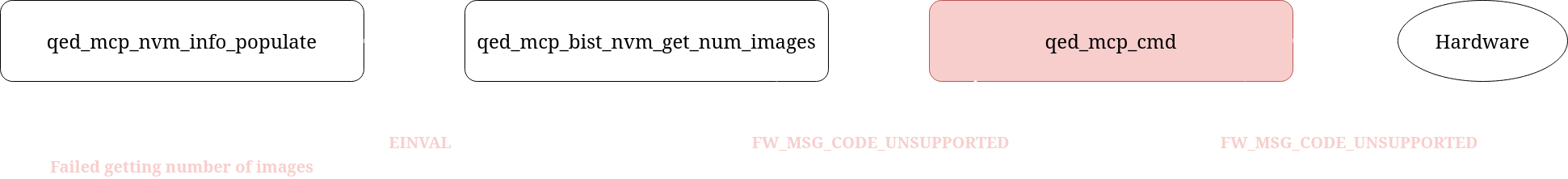

- We look at the failing function: `qed_mcp_nvm_info_populate`

- Using the log, we find where it lives:

/* Acquire from MFW the amount of available images */
nvm_info.num_images = 0;
rc = qed_mcp_bist_nvm_get_num_images(p_hwfn, p_ptt, &nvm_info.num_images);
if (rc == -EOPNOTSUPP) {
    DP_INFO(p_hwfn, "DRV_MSG_CODE_BIST_TEST is not supported\n");
    goto out;
} else if (rc || !nvm_info.num_images) {
    DP_ERR(p_hwfn, "Failed getting number of images\n");
    goto err0;
}

Time to fix it

int qed_mcp_bist_nvm_get_num_images(struct qed_hwfn *p_hwfn, struct qed_ptt *p_ptt, u32 *num_images) {
    u32 drv_mb_param = 0, rsp;
    int rc = 0;

    drv_mb_param = (DRV_MB_PARAM_BIST_NVM_TEST_NUM_IMAGES << DRV_MB_PARAM_BIST_TEST_INDEX_SHIFT);
    rc = qed_mcp_cmd(p_hwfn, p_ptt, DRV_MSG_CODE_BIST_TEST, drv_mb_param, &rsp, num_images);
    if (rc)
        return rc;
    if (((rsp & FW_MSG_CODE_MASK) != FW_MSG_CODE_OK))
        rc = -EINVAL;
    return rc;
}

Time to fix it

- We have two branches, so we add logs on both `if`s to print the values of `rc` and `rsp`

- Turns out, `rsp` doesn't have the expected value...

- Its value is `0x00000000`

- Looking at `qed_mfw_hsi.h` we find that this code...

- ... Means the operation is not supported!

Time to fix it

- Remember, in `qed_mcp_nvm_info_populate`, we had this:

if (rc == -EOPNOTSUPP) {
    DP_INFO(p_hwfn, "DRV_MSG_CODE_BIST_TEST is not supported\n");
    goto out;
}

... Except that if nothing ever returns `-EOPNOTSUPP`, this branch is pretty useless!

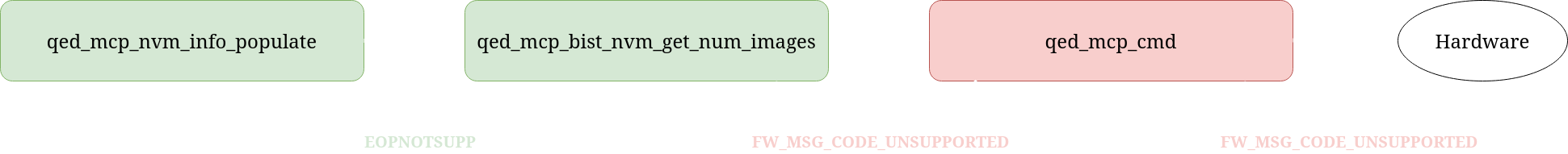

Time to fix it

- We just add a simple check in `qed_mcp_bist_nvm_get_num_images`:

int qed_mcp_bist_nvm_get_num_images(struct qed_hwfn *p_hwfn, struct qed_ptt *p_ptt, u32 *num_images) {
    u32 drv_mb_param = 0, rsp;
    [...]
    rc = qed_mcp_cmd(p_hwfn, p_ptt, DRV_MSG_CODE_BIST_TEST, drv_mb_param, &rsp, num_images);
    if (rc)
        return rc;
+   if (((rsp & FW_MSG_CODE_MASK) == FW_MSG_CODE_UNSUPPORTED)) // <= Here :-)
+       rc = -EOPNOTSUPP;
~   else if (((rsp & FW_MSG_CODE_MASK) != FW_MSG_CODE_OK))
        rc = -EINVAL;
    return rc;
}
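To see why that one extra branch is enough, here is a small userspace model of the before/after behavior. It is a sketch, not driver code: every `demo_`/`DEMO_` name is hypothetical, the constant values are illustrative stand-ins (the authoritative definitions live in `qed_mfw_hsi.h`; only the "unsupported" value `0x00000000` is the one we actually observed), and `demo_probe_outcome` assumes the `out` label is the path on which probing continues.

```c
#include <errno.h>
#include <stdint.h>

/* Illustrative stand-ins for the firmware response codes (assumed values). */
#define DEMO_FW_MSG_CODE_MASK        0xffff0000u
#define DEMO_FW_MSG_CODE_UNSUPPORTED 0x00000000u
#define DEMO_FW_MSG_CODE_OK          0x00160000u

/* Before the fix: every response that is not OK becomes -EINVAL. */
int demo_status_before_fix(uint32_t rsp)
{
    if ((rsp & DEMO_FW_MSG_CODE_MASK) != DEMO_FW_MSG_CODE_OK)
        return -EINVAL;
    return 0;
}

/* After the fix: "unsupported" is reported as -EOPNOTSUPP, other failures stay -EINVAL. */
int demo_status_after_fix(uint32_t rsp)
{
    if ((rsp & DEMO_FW_MSG_CODE_MASK) == DEMO_FW_MSG_CODE_UNSUPPORTED)
        return -EOPNOTSUPP;
    if ((rsp & DEMO_FW_MSG_CODE_MASK) != DEMO_FW_MSG_CODE_OK)
        return -EINVAL;
    return 0;
}

/* Model of the caller's branches: 0 means probing continues, -1 means it aborts. */
int demo_probe_outcome(int rc, uint32_t num_images)
{
    if (rc == -EOPNOTSUPP)
        return 0;    /* "goto out": skip the image list, keep going */
    if (rc || !num_images)
        return -1;   /* "goto err0": hw prepare fails */
    return 0;
}
```

With `rsp == 0`, the "before" status sends the caller down the error branch, while the "after" status reaches the branch that was already written for unsupported firmware.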

We recompile...

- Version 6.12 (latest), with our fix...

And it works!

We saved our servers! 🎉

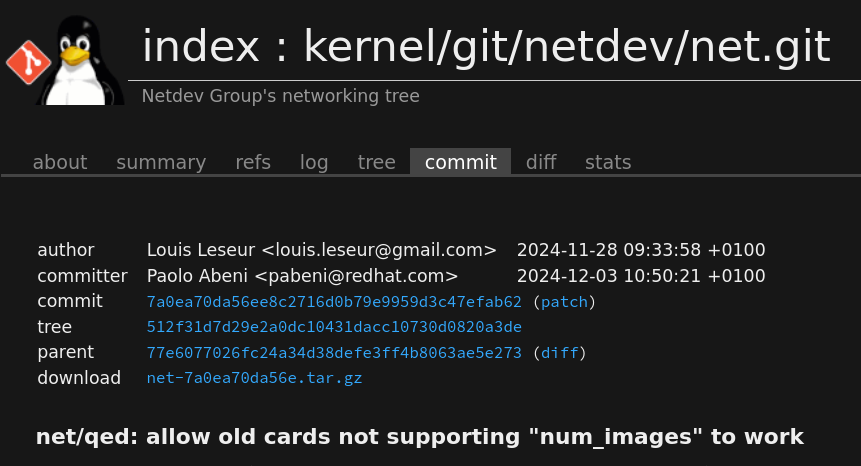

- We submit our fix to the kernel so everyone can benefit

- And send our patch to the kernel mailing list (archived on lore.kernel.org)

And after a few weeks...

- Our submission is approved, and we land in the codebase! (in 6.13)

- Fun fact: this fix will be backported all the way to LTS 5.4 (2019)!

To wrap up...

- We got lucky in our debugging process.

- Linux is awesome; and being open-source makes it even better!

- No system is flawless, not even the best one.

- If three goblins managed to do it, so can you! Contribute to OSS. ❤️